Hooking up - Automation (and Security) wins with Hooks

I am inordinately pleased with myself today. No, no, it was nothing earth-shattering. For you, there's a good chance that it's nothing at all. But I am a sucker for small wins, automation and developer-driven workflows. Any wins around automation and dev workflows, actually.

Don't know what I am talking about? Let me explain. Let's start with the problem that we (my colleagues and I) had today.

We do a lot of training. A LOT. One of the things that makes a training great is great instructions. Our trainings are technical and very hands-on. This means that there are lots of moving parts, commands, configurations and other "C" words that can go very badly if we don't document our instructions well.

More recently, we have been working on a fully automated system for delivering instructions. Previously, we had a static site that people would have to locate instructions on. Easy enough. But as with everything else, we wanted to make it easier.

In this case, the user selects the lab they want to work on, and BAM! The instructions for that specific lab are dynamically loaded on the screen, making it super-simple for the user to just follow along. We even provide a copy-paste button for those who don't want the hassle of typing commands and configurations.

But there were problems:

We render the instructions from Markdown to HTML. The instructions themselves are markdown, and they are parsed and rendered as a static HTML file. With static site generators (all the rage these days), you can do this quite easily. All you have to do is add your markdown files in a particular directory structure and, lo and behold, you have a static site with all your docs. We didn't have that option with this feature. We:

- Still wanted to maintain the Markdown flow, rather than get our trainers to load content into a text-area in an app. It's not intuitive, it's not easy to work with and it's not suitable for frequent updates, images, and a number of other things. Ask a user of Salesforce or some other app riddled with thousands of text-fields. It sucks! And it is not salutary for rapidly evolving, free-wheeling requirements like this.

- Wanted it to have all the trappings of a static site, because, see point above. And most of our trainers are developers. So having a git-centric workflow was central to the way we do things.

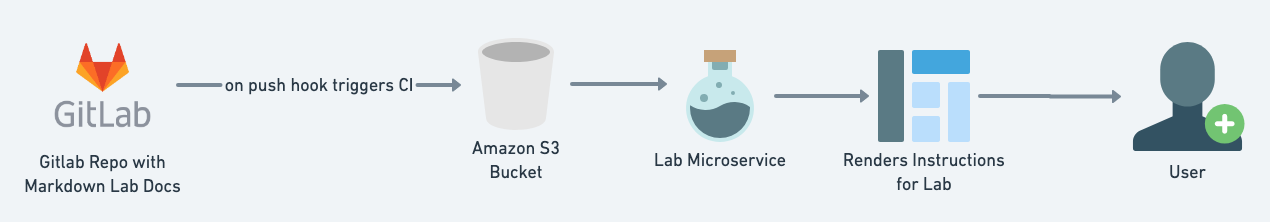

So we came up with this design

In this case, S3 would serve as the "database" for all the lab markdown files, which would be loaded on demand, at runtime, for whichever lab the user wants to work on.

We solved most problems, around speed, throughput, etc.

There was only one problem.

What about images?

You know, Images? Those things that instructions are typically full of. Diagrams, Screenshots and several other pngs, jpegs, etc that help people understand things better. Apparently, they are worth a thousand words. Each. 🤷🏻♂️

We started exploring one option:

Sync the images with the GitLab job and reference them as URLs on the user front-end

But then, being a security company - we started, as always, threat modeling:

- What if people could see all the images? We'd have to enable S3 as a website/static resource and manage permissions, etc. Pain. Especially since, as we speak, someone just broke into Twilio's S3 "silo", whatever that means.

- Aside from the security risk (non-trivial), there would also be the performance issue of loading those (heavy) resources separately.

Which is when I came up with the thought:

Why not render the images as base64?

I mean, base64-encoded images are common. They are in several places. Can we embed them in the markdown? If so, how? I checked for a bit and saw that one could use a base64 `data:` URI as the image target in markdown.
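The shape of such an embedded image is an ordinary inline image whose target is a `data:` URI instead of a path. Here is a minimal Go sketch of building one. The function name `dataURIImage` and the placeholder bytes are illustrative assumptions, and whether the image displays depends on the markdown renderer in use.

```go
package main

import (
	"encoding/base64"
	"fmt"
)

// dataURIImage returns a markdown image whose target is a base64 data URI.
// The name and signature are illustrative, not taken from the original tooling.
func dataURIImage(alt, mimeType string, data []byte) string {
	encoded := base64.StdEncoding.EncodeToString(data)
	return fmt.Sprintf("![%s](data:%s;base64,%s)", alt, mimeType, encoded)
}

func main() {
	// Placeholder bytes stand in for the contents of a real PNG file.
	fmt.Println(dataURIImage("diagram", "image/png", []byte("not a real png")))
}
```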

Perfect! That's all we need!

Wait...But how do we render it as markdown?

- We have to make another round-trip to S3

- Process the Image and base64 encode it

- Send it with the markdown file. And if we have even 3-4 images in the doc, it would be painful in terms of performance

That's when we decided to focus on the Developer workflow. Let's revisit that.

- I am the trainer. I create my lab instructions with markdown and link images from a local `img` directory, some other directory on my machine, or possibly a URL

- I commit my lab instructions to a git repo once I am done editing them

That's when it hit us.

Why not have the instructor just generate and place the base64-encoded image markdown in the document?

But I could quickly see this going sideways. The developer/trainer would have to:

- use some CLI/tool to generate Base64 encoded values

- copy-paste it into the doc.

Which is painful and, likely, really error-prone. Which again, sucks!

So we finally arrived at the hero of this article: hooks.

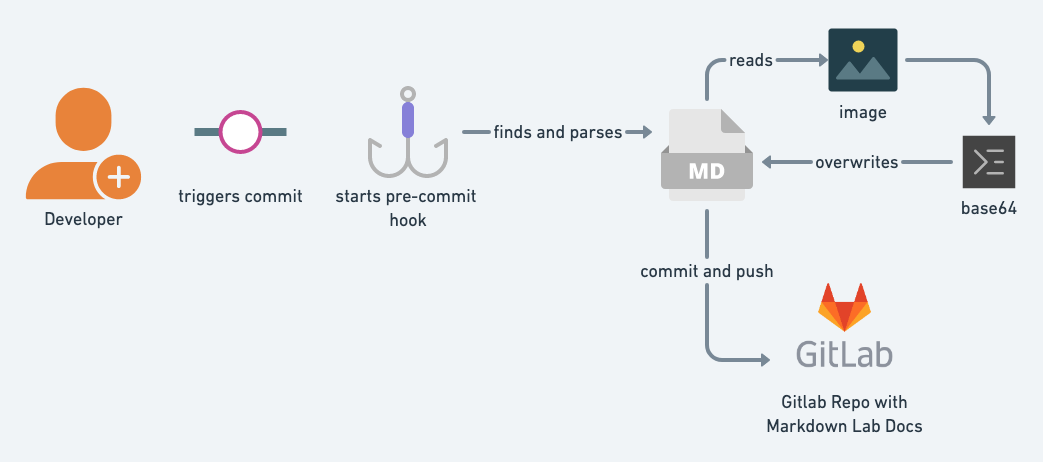

The idea was this

- Developer does the markdown flow as usual. No changes to the way he/she uses images

- Once she decides to commit and initiates a `git commit`, a pre-commit git hook is triggered on her local machine

- This hook:

  - looks for markdown files in the commit

  - parses markdown for image links

  - reads the image file from the local machine

  - encodes it to base64

  - writes the result back to the markdown file (same name and path) and completes the commit
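The steps in the hook can be sketched in Go roughly as follows. This is not the source of the actual utility, just a minimal illustration under some assumptions: image links use the plain `![alt](target)` form, the MIME type is guessed from the file extension, and the names `inlineImages`, `imageLink` and `mimeTypes` are made up for this example.

```go
package main

import (
	"encoding/base64"
	"fmt"
	"os"
	"path/filepath"
	"regexp"
	"strings"
)

// imageLink matches inline images of the form ![alt](target).
// Targets with spaces or a quoted title do not match and stay as they are.
var imageLink = regexp.MustCompile(`!\[([^\]]*)\]\(([^)\s]+)\)`)

// mimeTypes maps file extensions to the MIME type used in the data URI.
var mimeTypes = map[string]string{
	".png": "image/png", ".jpg": "image/jpeg", ".jpeg": "image/jpeg",
	".gif": "image/gif", ".svg": "image/svg+xml",
}

// inlineImages swaps local image targets in md for base64 data URIs.
// read loads the bytes behind a target; passing it in keeps the logic testable.
func inlineImages(md string, read func(target string) ([]byte, error)) (string, error) {
	var firstErr error
	out := imageLink.ReplaceAllStringFunc(md, func(match string) string {
		parts := imageLink.FindStringSubmatch(match)
		alt, target := parts[1], parts[2]
		// Leave remote URLs and already-embedded images untouched.
		if strings.HasPrefix(target, "http://") || strings.HasPrefix(target, "https://") ||
			strings.HasPrefix(target, "data:") {
			return match
		}
		// Leave files with an unknown extension untouched.
		mimeType, known := mimeTypes[strings.ToLower(filepath.Ext(target))]
		if !known {
			return match
		}
		data, err := read(target)
		if err != nil {
			if firstErr == nil {
				firstErr = err
			}
			return match
		}
		encoded := base64.StdEncoding.EncodeToString(data)
		return fmt.Sprintf("![%s](data:%s;base64,%s)", alt, mimeType, encoded)
	})
	return out, firstErr
}

func main() {
	if len(os.Args) != 2 {
		fmt.Fprintln(os.Stderr, "usage: inlineimages FILE.md")
		os.Exit(2)
	}
	path := os.Args[1]
	src, err := os.ReadFile(path)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	// Resolve relative image paths against the directory of the markdown file.
	read := func(target string) ([]byte, error) {
		if !filepath.IsAbs(target) {
			target = filepath.Join(filepath.Dir(path), target)
		}
		return os.ReadFile(target)
	}
	out, err := inlineImages(string(src), read)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	// Overwrite the file in place: same name, same path.
	if err := os.WriteFile(path, []byte(out), 0o644); err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
}
```

In this sketch a missing image aborts the run with a non-zero exit code before anything is written, so a broken link never reaches the repository half-converted. That is a design choice for the example, not a statement about how the real tool behaves.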

Now, once the commit is pushed to S3, there's no need for multiple trips or to even expose the bucket.

Our micro-service reads the markdown file with the specific name from the S3 bucket, and everything required, images and all, just appears.

So this looked pretty great. I coded a small Go utility in a couple of hours, set up a pre-commit shell script and was done.

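    #!/usr/bin/env bash
    # pre-commit hook: run mdimgparse over the tracked files, then stage the results.

    echo "================================================================================="
    echo "Normalizing Markdown files"

    # Git runs pre-commit hooks from the root of the working tree,
    # so the relative paths listed below resolve without a cd.
    # Every file tracked on master is listed; paths containing spaces are not handled.
    files=$(git ls-tree -r master --name-only)

    for file in $files
    do
        echo "Normalizing $file"
        mdimgparse "$file"
    done

    echo "Markdown normalization is done"
    echo "================================================================================="

    # Stage everything in the working tree, including the rewritten files,
    # so the changes land in this commit.
    git add .
    exit 0

`mdimgparse` is the Go utility that does this for each file.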
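The hook above hands every file tracked on master to the utility. A narrower variant would look only at the markdown files staged for the current commit. The Go sketch below shows one way to do that, assuming the standard `git diff --cached --name-only` listing; the helper names `markdownFiles` and `stagedFiles` are hypothetical and not part of the original setup.

```go
package main

import (
	"fmt"
	"os"
	"os/exec"
	"path/filepath"
	"strings"
)

// markdownFiles keeps only the paths that end in .md or .markdown.
func markdownFiles(paths []string) []string {
	var out []string
	for _, p := range paths {
		switch strings.ToLower(filepath.Ext(p)) {
		case ".md", ".markdown":
			out = append(out, p)
		}
	}
	return out
}

// stagedFiles asks git for the files added, copied or modified in the index.
// The -z flag separates names with NUL bytes, so paths with spaces survive.
func stagedFiles() ([]string, error) {
	cmd := exec.Command("git", "diff", "--cached", "--name-only", "--diff-filter=ACM", "-z")
	raw, err := cmd.Output()
	if err != nil {
		return nil, err
	}
	var files []string
	for _, f := range strings.Split(string(raw), "\x00") {
		if f != "" {
			files = append(files, f)
		}
	}
	return files, nil
}

func main() {
	files, err := stagedFiles()
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	// Print the staged markdown files, one per line.
	for _, f := range markdownFiles(files) {
		fmt.Println(f)
	}
}
```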

Within 2.5 hours total, we were completely ready and running with this workflow. And best yet:

- We made no changes to our developers'/trainers' workflow. That's critical. Slowing them down would only impact us negatively. This is a key DevSecOps lesson as well: integrate with their workflows.

- We were able to seamlessly swap the previous image link for the encoded one without any overhead to anybody (more important) and without adding any additional process. Everything is handled behind the scenes with git hooks.

Hooks are great. And they are, actually, everywhere. Most products that you use and love (and not so much) typically have them. I love using hooks and ephemeral workflows to run some really amazing security workflows. Be it with:

- GitHub

- Shopify

- Kubernetes

- AWS, especially Lambda

Hooks can be leveraged to trigger Security Events, Mutate security exceptions into a compliant-security definition, and much more.

Want to discuss more automation and hooks? Give me a shout on Twitter/LinkedIn, etc.

If you're looking for awesome Kubernetes Security Training, drop us a line here or you can attend our upcoming class at BlackHat USA 2020, "Kubernetes Security Masterclass"